Automation has become so sophisticated that on a typical passenger flight, a human pilot holds the controls for a grand total of just three minutes. Instead, pilots spend much of their time monitoring screens and keying in data. They’ve become, it’s not much of an exaggeration to say, computer operators.

And that, many aviation and automation experts have concluded, is a problem. Overuse of automation erodes pilots’ expertise and dulls their reflexes, leading to what Jan Noyes, an ergonomics expert at Britain’s University of Bristol, terms “a de-skilling of the crew.” No one doubts that autopilot has contributed to improvements in flight safety over the years. It reduces pilot fatigue and provides advance warnings of problems, and it can keep a plane airborne should the crew become disabled. But the steady overall decline in plane crashes masks the recent arrival of “a spectacularly new type of accident,” says Raja Parasuraman, a psychology professor at George Mason University and a leading authority on automation. When an autopilot system fails, too many pilots, thrust abruptly into what has become a rare role, make mistakes.

The experience of airlines should give us pause. It reveals that automation, for all its benefits, can take a toll on the performance and talents of those who rely on it. The implications go well beyond safety. Because automation alters how we act, how we learn, and what we know, it has an ethical dimension. The choices we make, or fail to make, about which tasks we hand off to machines shape our lives and the place we make for ourselves in the world. That has always been true, but in recent years, as the locus of labor-saving technology has shifted from machinery to software, automation has become ever more pervasive, even as its workings have become more hidden from us. Seeking convenience, speed, and efficiency, we rush to off-load work to computers without reflecting on what we might be sacrificing as a result.

[…]

A hundred years ago, the British mathematician and philosopher Alfred North Whitehead wrote, “Civilization advances by extending the number of important operations which we can perform without thinking about them.” It’s hard to imagine a more confident expression of faith in automation. Implicit in Whitehead’s words is a belief in a hierarchy of human activities: Every time we off-load a job to a tool or a machine, we free ourselves to climb to a higher pursuit, one requiring greater dexterity, deeper intelligence, or a broader perspective. We may lose something with each upward step, but what we gain is, in the long run, far greater.

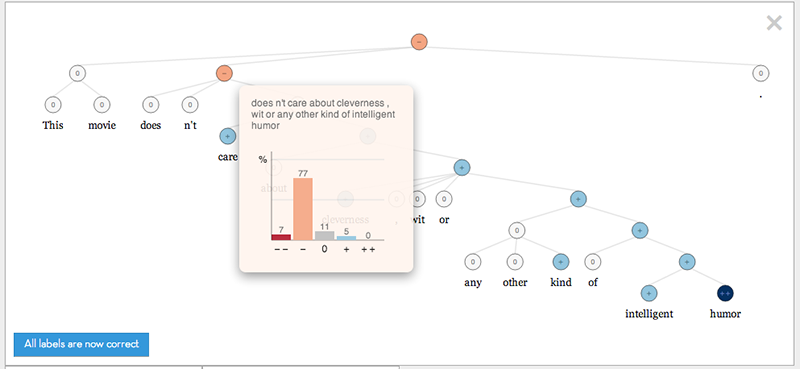

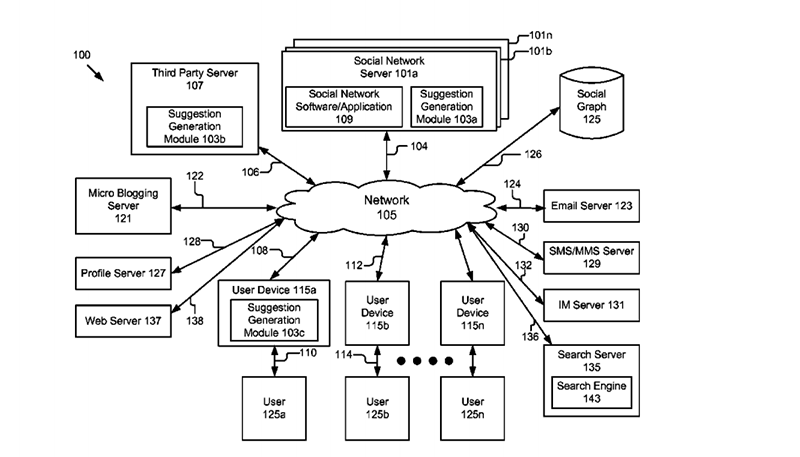

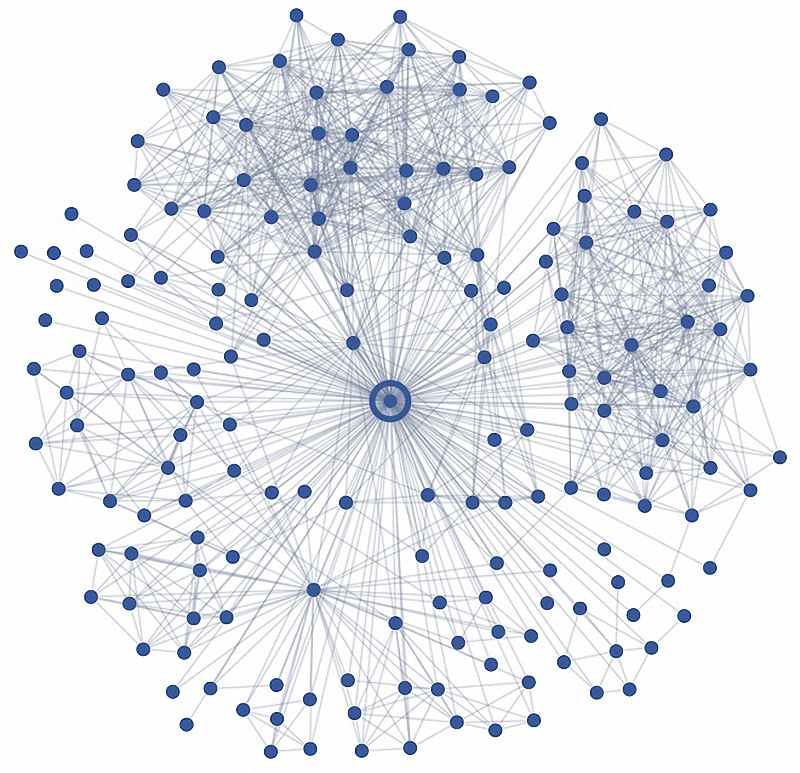

History provides plenty of evidence to support Whitehead. We humans have been handing off chores, both physical and mental, to tools since the invention of the lever, the wheel, and the counting bead. But Whitehead’s observation should not be mistaken for a universal truth. He was writing when automation tended to be limited to distinct, well-defined, and repetitive tasks—weaving fabric with a steam loom, adding numbers with a mechanical calculator. Automation is different now. Computers can be programmed to perform complex activities in which a succession of tightly coordinated tasks is carried out through an evaluation of many variables. Many software programs take on intellectual work—observing and sensing, analyzing and judging, even making decisions—that until recently was considered the preserve of humans. That may leave the person operating the computer to play the role of a high-tech clerk—entering data, monitoring outputs, and watching for failures. Rather than opening new frontiers of thought and action, software ends up narrowing our focus. We trade subtle, specialized talents for more routine, less distinctive ones.

Most of us want to believe that automation frees us to spend our time on higher pursuits but doesn’t otherwise alter the way we behave or think. That view is a fallacy—an expression of what scholars of automation call the “substitution myth.” A labor-saving device doesn’t just provide a substitute for some isolated component of a job or other activity. It alters the character of the entire task, including the roles, attitudes, and skills of the people taking part. As Parasuraman and a colleague explained in a 2010 journal article, “Automation does not simply supplant human activity but rather changes it, often in ways unintended and unanticipated by the designers of automation.”

Psychologists have found that when we work with computers, we often fall victim to two cognitive ailments—complacency and bias—that can undercut our performance and lead to mistakes. Automation complacency occurs when a computer lulls us into a false sense of security. Confident that the machine will work flawlessly and handle any problem that crops up, we allow our attention to drift. We become disengaged from our work, and our awareness of what’s going on around us fades. Automation bias occurs when we place too much faith in the accuracy of the information coming through our monitors. Our trust in the software becomes so strong that we ignore or discount other information sources, including our own eyes and ears. When a computer provides incorrect or insufficient data, we remain oblivious to the error.

Examples of complacency and bias have been well documented in high-risk situations (on flight decks and battlefields, in factory control rooms), but recent studies suggest that the problems can bedevil anyone working with a computer. Many radiologists today use analytical software to highlight suspicious areas on mammograms. Usually, the highlights aid in the discovery of disease. But they can also have the opposite effect. Biased by the software’s suggestions, radiologists may give cursory attention to the areas of an image that haven’t been highlighted, sometimes overlooking an early-stage tumor. Most of us have experienced complacency at a computer ourselves: when using e-mail or word-processing software, we become less proficient proofreaders once we know that a spell-checker is at work.

[…]

Who needs humans, anyway? That question, in one rhetorical form or another, comes up frequently in discussions of automation. If computers’ abilities are expanding so quickly and if people, by comparison, seem slow, clumsy, and error-prone, why not build immaculately self-contained systems that perform flawlessly without any human oversight or intervention? Why not take the human factor out of the equation? The technology theorist Kevin Kelly, commenting on the link between automation and pilot error, argued that the obvious solution is to develop an entirely autonomous autopilot: “Human pilots should not be flying planes in the long run.” The Silicon Valley venture capitalist Vinod Khosla recently suggested that health care will be much improved when medical software—which he has dubbed “Doctor Algorithm”—evolves from assisting primary-care physicians in making diagnoses to replacing the doctors entirely. The cure for imperfect automation is total automation.